A good samaritan once said to me, “However smart your devices and gadgets become, they can never do what humans can do in a way that humans can do.”

I am sure someone has said something similar to you too. He wasn’t entirely wrong, but in the context of cognitive computing, he wasn’t completely right either.

Yes, for ages computers were simply advanced versions of calculators and typewriters. The history of computers can be broadly divided into two eras.

In the first, computers could only tabulate sums. In the second, we could write programs that made them do various things, even dynamically.

Enter ‘Cognitive Computing’, the third era of computing.

Cognitive Computing is an attempt at making computers mimic the way the human brain works and processes thoughts.

The need for it arises from the fact that despite tremendous developments in computers, they cannot perform some tasks that humans easily can.

As MIT Media Lab co-founder Nicholas Negroponte has said, “Computing is not about computing anymore. It is about living.”

Let us take a closer look at it in the next section of this cognitive computing tutorial.

What Is Cognitive Computing?

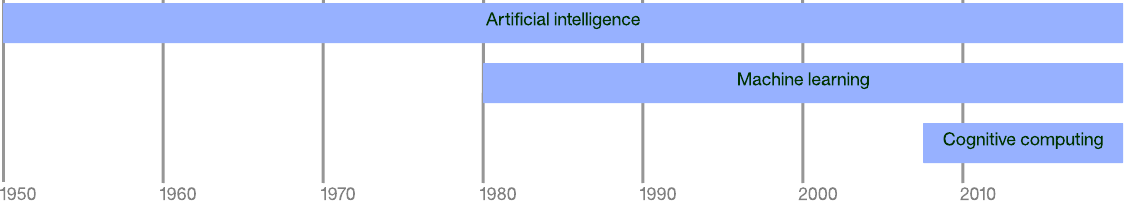

Cognitive computing is often used interchangeably with terms such as Artificial Intelligence, Machine Learning, Natural Language Processing, etc.

It is closely associated with all of the above but is not the same. Compared with AI at least, the concept of cognitive computing is relatively new.

Over the last two decades, computers have been doing incredible jobs. We have been able to build machines that can do almost everything humans can.

Almost! A few things, however, such as making sense of natural language or identifying a unique object in a picture, were still impossible for computers.

We will see a list of practical applications later, but let us see one example in detail for better understanding.

An Example Case

Cognitive computing examples are the best way to clearly understand the underlying idea. Imagine cognitive computing as applied to career consultancy.

A career consultant understands your existing qualifications, your strengths, likes, related and miscellaneous achievements, volunteering experience, etc.

At the same time, they would also be aware of current market trends, predictions about various sectors, etc.

The more they know about you, the better their advice will be.

It is not an impossible task, but it is certainly a difficult one if the goal is to provide the most accurate suggestions, because it requires collecting and studying a large amount of data and making calculations based on it.

For ages, computers were not able to do this, but with cognitive computing, machines can learn to collect and understand all the data and make sense of it to draw conclusions with even better precision than humans.

So, you can feed the machine your personal data, either gradually throughout your life or all at once, and ask it to suggest the best field of work and role for you.

It can make use of everything from your academic grades to your entertainment interests. In the end, it provides you with an answer and keeps you in the decision-making position.
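The idea of scoring options and leaving the choice to the person can be sketched roughly as follows. This is only a toy illustration, not how any real cognitive system works: the function name `rank_careers`, the trait names, and the numeric strengths are all hypothetical, and a simple average stands in for the learning a real system would do.

```python
def rank_careers(profile, careers, top_n=3):
    """Rank careers by how well a person's traits match each career.

    profile: dict mapping a trait to its strength in [0, 1] (hypothetical data).
    careers: dict mapping a career name to a set of relevant traits (hypothetical data).
    Returns the top_n (career, score) pairs, leaving the final choice to the user.
    """
    scores = {}
    for name, traits in careers.items():
        # Average the person's strength over the traits this career values.
        total = sum(profile.get(trait, 0.0) for trait in traits)
        scores[name] = total / max(len(traits), 1)
    # Highest score first; the caller sees several options, not one verdict.
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_n]


profile = {"math": 0.9, "writing": 0.4, "design": 0.2}
careers = {
    "data analyst": {"math"},
    "journalist": {"writing"},
    "ux designer": {"design", "writing"},
}
for career, score in rank_careers(profile, careers):
    print(career, round(score, 2))
```

Returning a ranked list rather than a single answer mirrors the point above: the machine suggests, and the person decides.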

Definition

It is difficult to precisely define or answer ‘what is Cognitive Computing’ (which is why we went for an example first), and there is no single accepted definition, but the following is more or less accepted:

“Cognitive computing (CC) describes technology platforms that, broadly speaking, are based on the scientific disciplines of artificial intelligence and signal processing. These platforms encompass machine learning, reasoning, natural language processing, speech recognition and vision (object recognition), human-computer interaction, dialogue, and narrative generation, among other technologies.”

IBM, one of the pioneers in the world of information technology, has the following on their website in one of their blogs:

“Cognitive Computing is systems that learn at scale, reason with purpose, and interact with humans naturally. It is a mixture of computer science and cognitive science, i.e., the understanding of the human brain and how it works. By means of self-teaching algorithms that use data mining, visual recognition, and natural language processing, the computer is able to solve problems and thereby optimize human processes.”

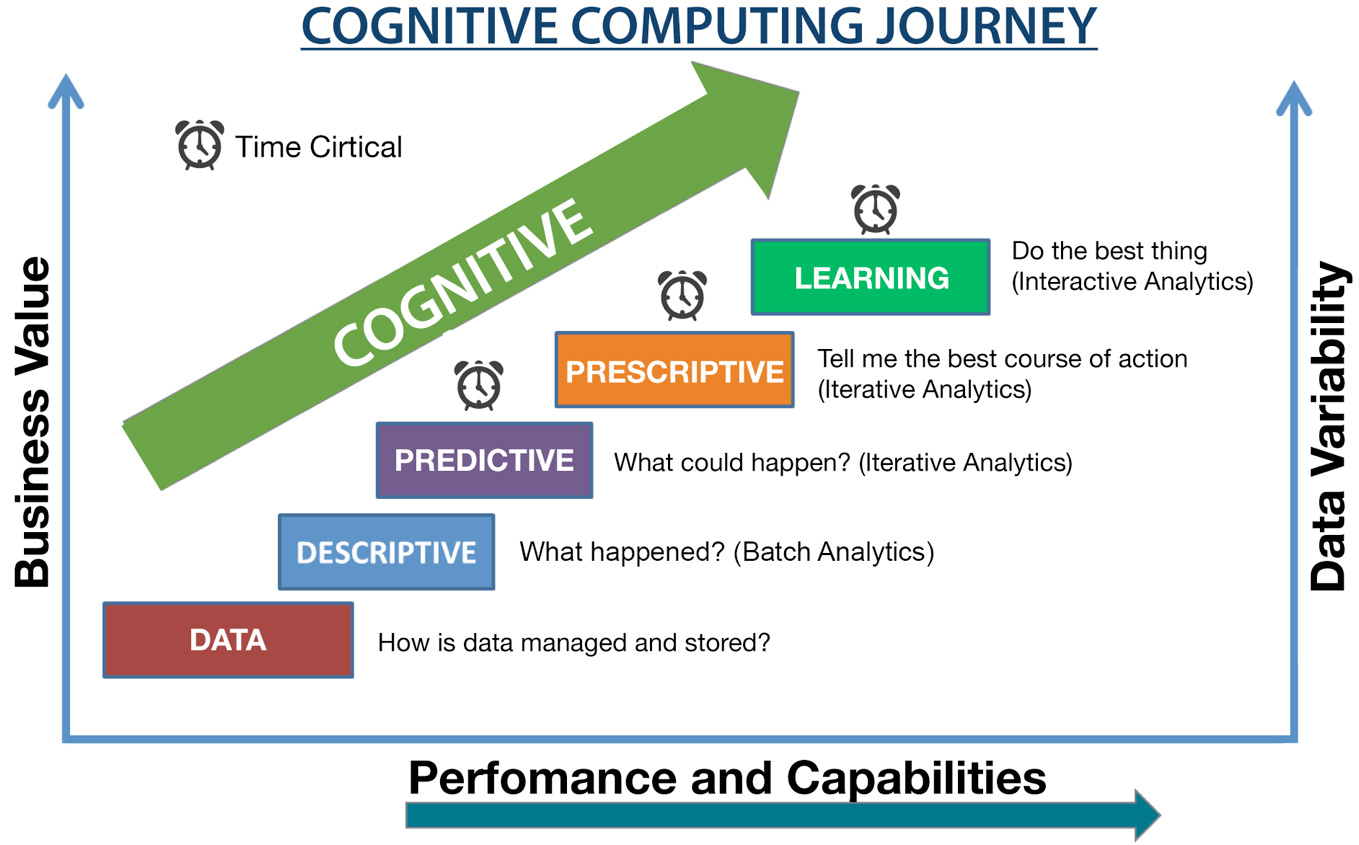

Check the following image by IBM that explains the cognition journey from simple data to learning and the steps in it in a simple manner:

The following video can also help you understand the concept better.

https://www.youtube.com/watch?v=-8p3jf0t7w0

It can be said that cognitive computing aims to do the things only humans could do, while doing it faster and perhaps more accurately than humans, thus putting more power in the hands of humans employing this technology.

Key Attributes

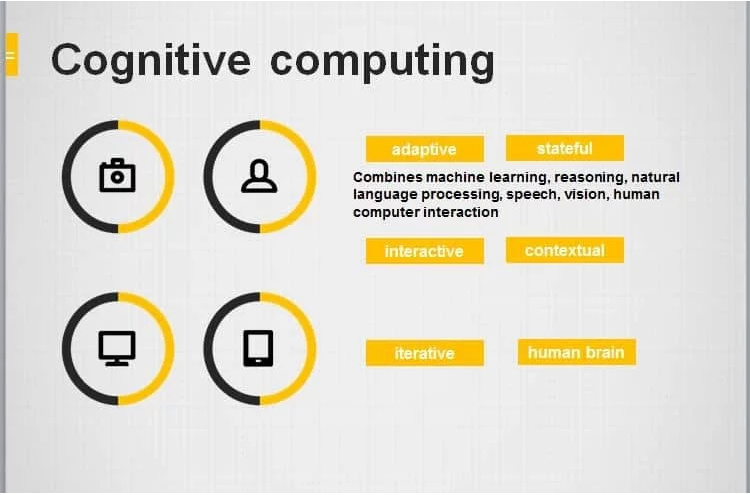

For a computing system to approach a problem as a human brain does, it must have some important attributes, as given below:

(i) Adaptive

Cognitive systems should tolerate unpredictability and adapt as information or requirements change. They can be designed to work dynamically by feeding on real-time data.

For example, a system could suggest deals and discounts on items in a specific section of a supermarket on a specific day, based on historical data as well as current customer data.
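A minimal sketch of the supermarket example, under stated assumptions: the function compares a section's historical sales with today's sales so far and suggests a larger discount the further today lags behind. The name `suggest_discount`, the `weight` and `max_discount` parameters, and the sample numbers are hypothetical and chosen only for illustration.

```python
def suggest_discount(historical_avg, sold_today, weight=0.5, max_discount=0.30):
    """Return a discount fraction for one store section.

    historical_avg: average units sold by this time on comparable days (assumed input).
    sold_today: units sold so far today (assumed real-time input).
    weight: how strongly a shortfall translates into a discount (hypothetical).
    max_discount: upper limit on the discount (hypothetical).
    """
    if historical_avg <= 0:
        return 0.0  # no history to compare against
    # Shortfall relative to history: 0.0 means on track, 1.0 means nothing sold.
    shortfall = max(0.0, (historical_avg - sold_today) / historical_avg)
    # Scale the shortfall and cap it at the maximum discount.
    return round(min(max_discount, weight * shortfall), 2)


print(suggest_discount(historical_avg=100, sold_today=60))
print(suggest_discount(historical_avg=100, sold_today=120))
```

Calling the function again as new sales figures arrive changes the suggestion, which is the adaptive behaviour described above in its simplest form.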

(ii) Interactive

A cognitive computer should allow easy interaction with the users. This is also where Natural Language Processing comes in. A user should be able to easily feed data and give commands as per what is to be derived from that data.

(iii) Iterative (and stateful)

They may have to repeat processes on modified information, or they may have to ask questions. They should remember the previous stages (hence stateful) and keep making dynamic changes to the solution-building process.
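The two ideas, remembering earlier stages and asking questions when information is missing, can be illustrated with a small sketch. The class `AdvisorSession` and its required fields are hypothetical and exist only for this example.

```python
class AdvisorSession:
    """Toy stateful session: remembers facts across turns and asks for what is missing."""

    REQUIRED = ("goal", "budget")  # hypothetical fields the system needs

    def __init__(self):
        self.state = {}  # facts remembered from earlier turns

    def step(self, **new_facts):
        # Merge new information into what is already known (stateful).
        self.state.update(new_facts)
        # Ask about the first missing field instead of guessing (iterative).
        for field in self.REQUIRED:
            if field not in self.state:
                return f"Question: what is your {field}?"
        return f"Answer: plan for {self.state['goal']} within {self.state['budget']}"


session = AdvisorSession()
print(session.step())                  # asks for the goal
print(session.step(goal="a laptop"))   # remembers the goal, asks for the budget
print(session.step(budget=800))        # has everything, gives an answer
```

Each call builds on what earlier calls stored, so the user never has to repeat information already given.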

(iv) Contextual

Like humans, they should be able to understand, identify, and extract contextual elements, such as time, location, syntax, user profile, and a huge variety of other things relevant to the underlying problem.

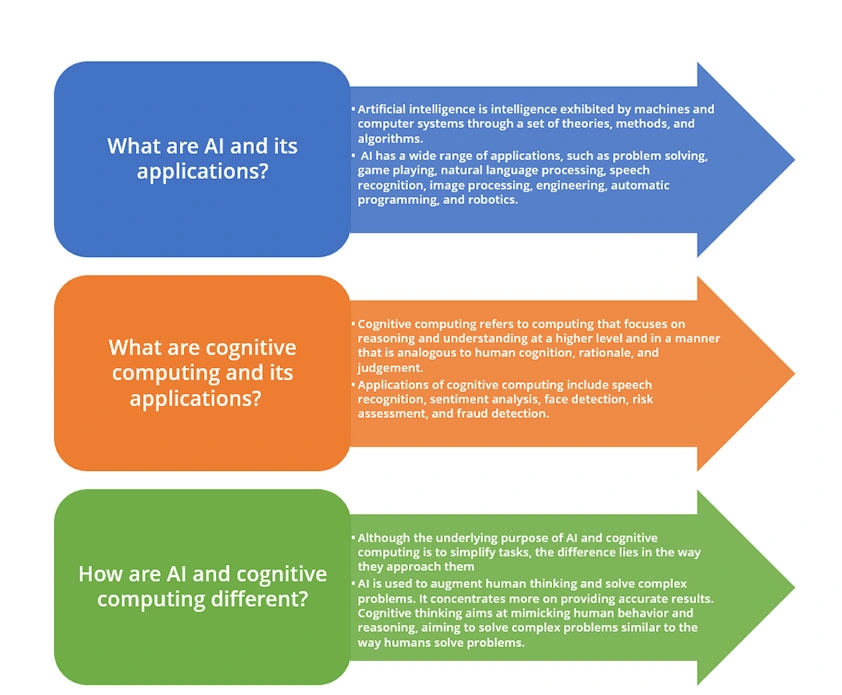

Cognitive Computing and AI

As stated before in this cognitive computing tutorial, CC and AI sound similar and are often mistaken for the same thing. Although closely related, they differ in the final solution each of them can provide.

Similarities

The similarities between AI and cognitive computing are obvious.

Both are technologies/platforms for super-smart machines that can do most human tasks much faster and with much more precision, and that can also do much more than humans can.

These similarities often mean ‘what is cognitive computing’ gets the immediate answer ‘Artificial Intelligence’.

Both include technologies such as machine learning, natural language processing, etc. Some people also believe cognitive computing to be a subset of Artificial Intelligence.

Differences

The difference lies in what we ultimately get out of each. It is commonly accepted that the major difference is that AI can do everything that cognitive computing does and, in addition, can also make decisions.

Cognitive computing, on the other hand, focuses on mimicking the human brain to the extent of reasoning like humans and helping humans make better decisions with more clarity.

The differences are blurred and may change with time but we can at least say that both of these, either together or separately, make things far easier for humans.

Practical Applications of Cognitive Computing

(i) Education and Research

Instead of a single teacher handling a large group of students ineffectively, cognitive computing can provide a personalized teacher/mentor for each student.

It can remember every small and large detail about the student and his/her performance, leading to a better selection of focus areas along with an array of other benefits.

It can aid research by working on data far more swiftly and accurately than humans can, which matters because a large portion of research involves dealing with data.

(ii) Healthcare

Analyzing the patient history and current state by studying various parameters and deriving conclusions such as diagnosis, best treatment, etc. is one of the most widely stated examples of cognitive computing.

It can also help with preparing personalized diet or nutrition plans as per a person’s medical history.

(iii) Finance

Cognitive systems can analyze the market in specific ways and guide investment decisions.

For example, they can analyze the history of a company’s shares in relation to external factors, such as political and social ones.

(iv) Sentiment Analysis and Customer Experience

Forbes has stressed that customer experience is the new brand, and some have called it the modern battleground.

To improve customer experience, it is important to understand the sentiment of customers around your brand or product.

Cognitive computing can work with data that includes customer reviews, social media comments/posts, and survey results. It can make sense of this data and pinpoint areas where changes are required.
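To make the idea concrete, here is a deliberately simple sketch that labels reviews by counting positive and negative keywords. This keyword-count approach is a stand-in, far cruder than the natural language processing a cognitive system would use, and the word lists and sample reviews are hypothetical.

```python
import re
from collections import Counter

# Hypothetical word lists; a real system would learn these from labeled data.
POSITIVE = {"good", "great", "love", "fast", "helpful"}
NEGATIVE = {"bad", "slow", "broken", "rude", "late"}


def sentiment(text):
    """Return 'positive', 'negative', or 'neutral' from simple word counts."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def summarize(reviews):
    """Count how many reviews fall into each sentiment bucket."""
    return Counter(sentiment(review) for review in reviews)


reviews = [
    "Great product, fast delivery",
    "Delivery was late and support was rude",
    "It arrived on Tuesday",
]
print(summarize(reviews))
```

A summary like this shows where negative feedback clusters, which is the kind of signal used to pinpoint areas that need change.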

Along with the above, there are many other practical cognitive computing examples, such as fraud detection, speech and voice recognition, etc.

Recent Developments in Cognitive Computing

Cognitive systems have become capable of doing most human activities, such as reading, writing, learning, etc.

They are looked upon as a key component of autonomous vehicles, one of the most hyped ongoing developments of this century. They are also being steadily employed in prostheses, brain-machine interfaces, and robotic assistants.

Besides the existing applications mentioned in the above section, cognitive computing is also slowly finding applications in autonomous weapons, unmanned aircraft, and intelligent large-scale production systems.

Key Players in Cognitive Computing

Realising the potential of cognitive systems, most IT giants have already made significant developments in this area.

Following is a list of companies and their cognitive computing sections:

Organisations have designed cognitive systems that allow writing a program with surprisingly few lines of code. They have also improved online threat detection to a large extent.

Another development is the use of data science to extract deeper information that leads to measurable actions.

Conclusion

With one application or another possible in almost every field, there is virtually no area of technology or business where cognitive computing cannot be applied. It has so far succeeded in performing cognitive actions faster and more accurately than humans.

There is already no shortage of cognitive computing examples in over half the areas of business. There is no doubt that as it grows, it will reach every remaining field too.

For this reason, it is important for everyone to understand the transformation our world is experiencing owing to cognitive computing, and we hope this comprehensive cognitive computing tutorial has helped you in that regard.
