Are you looking forward to learning Linear Algebra for Machine Learning?

Linear Algebra is a sub-field of mathematics concerned with vectors, matrices, and linear transforms. It is a key foundation of Machine Learning, from the notation used to describe how algorithms operate to the implementation of those algorithms in code.

In this post, we will look at Linear Algebra’s definition, its different examples and how it relates to vectors and matrices. Then we will proceed with vectors and matrices, and learn their implementation. We will include the knotty problem of eigenvalues and eigenvectors and learn how to solve those.

Further, we will look at how Linear Algebra and Machine Learning relate to each other, using some common Machine Learning examples that you may be familiar with and that are best understood through Linear Algebra.

In this post, I will delve deeper into Linear Algebra for Machine Learning. We will find out ways to improve skills and knowledge in Linear Algebra in order to learn more about Machine Learning. You will also learn about simple and multiple linear regression in Python.

Our discussion will further cover linear regression for Machine Learning in Python. Linear regression using Python has become extremely popular among developers, and this calls for a closer look at the Linear Algebra behind it.

What is Linear Algebra?

Linear Algebra is a sub-field of mathematics primarily concerned with vectors, matrices, and linear transforms. It lays the foundation for Machine Learning, from the notation used to describe how algorithms operate to the implementation of those algorithms in code.

Linear Algebra for Machine Learning

Machine Learning addresses the question of how to build computers that improve automatically through experience. It is one of today’s most rapidly growing technical fields. Machine Learning lies at the intersection of computer science and statistics, and at the core of artificial intelligence and data science.

Recent progress in Machine Learning has been driven both by the development of new learning algorithms and theory and by the ongoing explosion in the availability of online data and low-cost computation.

Data-intensive machine-learning methods have been adopted throughout science, technology, and commerce. This leads to more evidence-based decision-making across many walks of life, including healthcare, manufacturing, education, financial modelling, policing, and marketing.

Linear Algebra for Machine Learning Examples

Some of the best examples of Linear Algebra in Machine Learning include datasets and data files, images and photographs, Linear Regression, Regularization, Deep Learning, Principal Component Analysis, and Singular-Value Decomposition.

1. Dataset and Data Files

A dataset is a table-like set of numbers in which each row represents an observation and each column represents a feature of that observation. Every row has the same length, so the table as a whole is a matrix and each row is a vector.

In other words, the data is vectorized: rows can be provided to a model one at a time or in a batch, and the model can be pre-configured to expect rows of a fixed width.
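As a small illustration of the row-and-column view, here is a minimal sketch using NumPy. The numbers are made up for the example, and the batch size of two is an arbitrary choice.

```python
import numpy as np

# Hypothetical toy dataset: 4 observations (rows), 3 features (columns).
X = np.array([
    [5.1, 3.5, 1.4],
    [4.9, 3.0, 1.4],
    [6.2, 3.4, 5.4],
    [5.9, 3.0, 5.1],
])

n_rows, n_features = X.shape

# One row at a time: each row is a vector of fixed width.
first_row = X[0]

# Rows in batches of two: each batch is a smaller matrix of the same width.
batches = [X[i:i + 2] for i in range(0, n_rows, 2)]

print(X.shape, first_row.shape, [b.shape for b in batches])
```

Because every row has the same width, a model only needs to know `n_features` in advance, regardless of how many rows arrive.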

2. Images and Photographs

Images and photos are common instances of Linear Algebra in Machine Learning. Every image you work with is itself a table structure with a width and a height, holding one pixel value in each cell for black-and-white images or three pixel values in each cell for a color image.

A photo is another classic example of a matrix from Linear Algebra. Photo editing activities such as cropping, scaling, shearing, and so on are all described using the notation and operations of Linear Algebra.
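To make this concrete, the sketch below builds a tiny grayscale image and a tiny color image as NumPy arrays, crops by slicing, and applies a shear matrix to a single pixel coordinate. The image sizes, pixel values, and shear factor are all assumptions chosen for illustration.

```python
import numpy as np

# Hypothetical 4x6 grayscale image: one intensity value per pixel.
gray = np.arange(24, dtype=np.uint8).reshape(4, 6)

# Hypothetical color image of the same size: three values (R, G, B) per pixel.
color = np.zeros((4, 6, 3), dtype=np.uint8)

# Cropping is slicing: keep rows 1-2 and columns 2-4.
cropped = gray[1:3, 2:5]

# A horizontal shear written as a 2x2 matrix acting on (x, y) coordinates.
shear = np.array([[1.0, 0.5],
                  [0.0, 1.0]])
point = np.array([2.0, 4.0])      # a pixel position (x, y)
sheared_point = shear @ point     # x is shifted by 0.5 * y, y is unchanged

print(cropped.shape, color.shape, sheared_point)
```

Real image libraries apply such a matrix to every pixel coordinate and interpolate the result; the single point here only shows the underlying operation.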

3. Regularization

Regularization is another example of using Linear Algebra for Machine Learning. In applied Machine Learning, we often prefer simpler models, since these tend to generalize better from specific examples to unseen data.

In many methods that involve coefficients, such as regression methods and artificial neural networks, simpler models are often characterized by smaller coefficient values.

Regularization is used to encourage a model to minimize the size of its coefficients while it is being fit on data. Common implementations include the L2 and L1 forms of regularization. Both forms measure the magnitude or length of the coefficients as a vector, and both are lifted directly from a Linear Algebra concept called the vector norm.
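The two norms can be computed directly with NumPy. The sketch below also shows one way a penalty term can be added to a squared-error loss; the function name `ridge_penalized_loss` and the weighting parameter `alpha` are hypothetical names for this example, and the penalty uses the squared L2 norm, which is the usual convention for L2 (ridge) regularization.

```python
import numpy as np

# Hypothetical coefficient vector.
coefficients = np.array([3.0, -4.0, 0.0])

# L1 norm: sum of absolute values. L2 norm: Euclidean length.
l1 = np.linalg.norm(coefficients, ord=1)
l2 = np.linalg.norm(coefficients, ord=2)

def ridge_penalized_loss(X, y, w, alpha):
    """Squared error plus alpha times the squared L2 norm of w (illustrative)."""
    residual = X @ w - y
    return np.sum(residual ** 2) + alpha * np.sum(w ** 2)

print(l1, l2)
```

A larger `alpha` makes large coefficients more costly, which pushes the fitted model toward smaller coefficient values.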

4. Deep Learning

Deep learning is the recent resurgence in the use of artificial neural networks, with newer methods and faster hardware that allow for the development and training of larger and deeper (more layers) networks on very large datasets.

Its methods are routinely achieving state-of-the-art results on a range of challenging problems such as machine translation, photo captioning, speech recognition, and much more.

The execution of neural networks involves Linear Algebra data structures being multiplied and added together. Scaled up to multiple dimensions, deep learning methods work with vectors, matrices, and even tensors of inputs and coefficients, where a tensor here means an array with more than two dimensions.

Linear Algebra is central to Deep Learning, from the description of its methods in matrix notation to their implementation in libraries such as Google’s TensorFlow Python library.
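The "multiplied and added together" step can be sketched as the forward pass of a single fully connected layer. This is a minimal NumPy illustration, not how any particular deep learning library implements it; the layer sizes, the ReLU activation, and the function name `dense_relu` are assumptions for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

X = rng.normal(size=(4, 3))   # batch of 4 inputs with 3 features each
W = rng.normal(size=(3, 2))   # weight matrix: 3 inputs -> 2 units
b = np.zeros(2)               # bias vector, one entry per unit

def dense_relu(X, W, b):
    """One layer: matrix multiply, add the bias, apply ReLU."""
    return np.maximum(X @ W + b, 0.0)

hidden = dense_relu(X, W, b)

# A tensor in the sense used here: an array with more than two axes,
# for example a batch of 8 color images of 28x28 pixels.
batch_of_images = np.zeros((8, 28, 28, 3))

print(hidden.shape, batch_of_images.ndim)
```

Stacking several such layers, each with its own `W` and `b`, gives a deeper network built from the same matrix operations.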

Linear Regression

What is Linear Regression?

Linear regression is a statistical model that examines the linear relationship between two (Simple Linear Regression) or more (Multiple Linear Regression) variables: a dependent variable and one or more independent variables. In a linear relationship, when an independent variable increases or decreases, the dependent variable changes by a proportional amount.

The linear relationship can be positive (independent variable goes up, the dependent variable goes up) or negative (independent variable goes up, the dependent variable goes down). The overall idea of regression is to examine two things:

(1) Does a set of predictor variables do a good job of predicting an outcome (dependent) variable? (2) Which variables in particular are significant predictors of the outcome variable, and in what way do they impact it, as indicated by the magnitude and sign of the beta estimates?

These regression estimates are used to explain the relationship between one dependent variable and one or more independent variables.

The simplest form of the regression equation with one dependent and one independent variable is defined by the formula y = c + b*x, where y = estimated dependent variable score, c = constant, b = regression coefficient, and x = score on the independent variable.
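For the one-variable formula y = c + b*x, the least-squares estimates of b and c have a simple closed form. The sketch below computes them with NumPy on made-up data; the data values are assumptions for illustration only.

```python
import numpy as np

# Hypothetical observations of an independent variable x and a dependent variable y.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([2.1, 3.9, 6.2, 7.8, 10.1])

x_mean, y_mean = x.mean(), y.mean()

# Least-squares estimates for y = c + b*x:
# b is the covariance of x and y divided by the variance of x,
# c makes the line pass through the point of means.
b = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
c = y_mean - b * x_mean

y_hat = c + b * x   # estimated dependent variable scores

print(b, c)
```

The sign of `b` tells you whether the relationship is positive or negative, and its magnitude tells you how much y changes per unit change in x.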

Simple Linear Regression

Simple linear regression is used to find the relationship between two continuous variables. One is the predictor or independent variable and the other is the response or dependent variable. It looks for a statistical relationship, not a deterministic one.

The relationship between two variables is said to be deterministic if one variable can be expressed exactly in terms of the other.

For example, given a temperature in degrees Celsius, it is possible to compute the temperature in Fahrenheit exactly. A statistical relationship, in contrast, does not determine one variable exactly from the other.

An example is the relationship between height and weight.
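The difference between the two kinds of relationship shows up in the residuals of a fitted line. In the sketch below, the Celsius-to-Fahrenheit fit leaves essentially no error, while the height and weight fit does. The height and weight values are invented for illustration, and `np.polyfit` with degree 1 is used as a convenient way to fit a straight line.

```python
import numpy as np

# Deterministic: Fahrenheit is an exact linear function of Celsius.
celsius = np.array([-10.0, 0.0, 15.0, 25.0, 40.0])
fahrenheit = celsius * 9.0 / 5.0 + 32.0
slope_f, intercept_f = np.polyfit(celsius, fahrenheit, 1)
residuals_f = fahrenheit - (intercept_f + slope_f * celsius)

# Statistical: hypothetical heights (cm) and weights (kg) that only tend to rise together.
height_cm = np.array([150.0, 160.0, 165.0, 172.0, 180.0, 185.0])
weight_kg = np.array([52.0, 61.0, 58.0, 70.0, 74.0, 85.0])
slope_w, intercept_w = np.polyfit(height_cm, weight_kg, 1)
residuals_w = weight_kg - (intercept_w + slope_w * height_cm)

print(np.abs(residuals_f).max())   # essentially zero
print(np.abs(residuals_w).max())   # clearly non-zero
```

Simple linear regression is aimed at the second case, where the line summarizes a trend rather than reproducing every point.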

Multiple Linear Regression

The aim of multiple linear regression is to model the relationship between two or more features and a response by fitting a linear equation to observed data. It is an extension of Simple Linear Regression.
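In matrix form, fitting this linear equation is a least-squares problem. The sketch below solves it with `np.linalg.lstsq` on synthetic data; the true coefficients, the noise level, and the sample size are assumptions chosen so the result is easy to check.

```python
import numpy as np

rng = np.random.default_rng(42)

# Synthetic data: two features and a response generated from a known linear equation plus noise.
n = 200
X = rng.normal(size=(n, 2))
true_intercept = 1.0
true_coefs = np.array([2.0, -3.0])
y = true_intercept + X @ true_coefs + rng.normal(scale=0.1, size=n)

# Prepend a column of ones so the intercept is estimated along with the coefficients.
A = np.column_stack([np.ones(n), X])

# Least-squares solution of A @ beta ~ y.
beta, *_ = np.linalg.lstsq(A, y, rcond=None)
intercept, coefs = beta[0], beta[1:]

print(intercept, coefs)
```

With only one feature column, the same code reduces to Simple Linear Regression.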

Linear Regression using Python

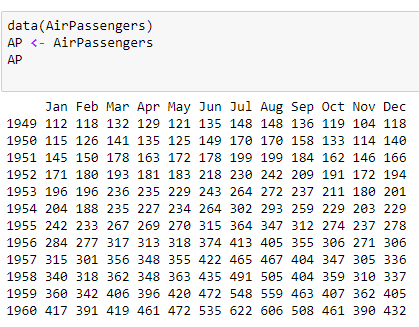

Linear Regression in Python can be performed in two common ways: with Statsmodels and with scikit-learn. Python is becoming increasingly popular for Linear Regression.

(a) Linear Regression using Python: Statsmodels

Statsmodels is a Python module that provides classes and functions for estimating many different statistical models, as well as for conducting statistical tests and statistical data exploration.

The simplest way to install Statsmodels is through the Anaconda distribution. After installing it, you will need to import it in every script or session in which you want to use it.

You can perform both Simple and Multiple Linear Regression in Statsmodels, using as few or as many variables as you want in your regression model.

(b) Linear Regression using Python: SKLearn

SKLearn (scikit-learn) has many learning algorithms for regression, classification, clustering, and dimensionality reduction. You may fit a model on the entire dataset or, more commonly, split your data into training data to fit the model on and test data to evaluate it on.
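The train and test split described above can be sketched with scikit-learn as follows. The example assumes `scikit-learn` and `numpy` are installed; the synthetic data, the 25% test fraction, and the fixed `random_state` are choices made for illustration.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Synthetic data: two features and a response from a known linear equation plus noise.
X = rng.normal(size=(200, 2))
y = 1.0 + 2.0 * X[:, 0] - 3.0 * X[:, 1] + rng.normal(scale=0.1, size=200)

# Hold out 25% of the rows as test data; fit only on the training rows.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

model = LinearRegression()
model.fit(X_train, y_train)

# R^2 on data the model has not seen during fitting.
r2_test = model.score(X_test, y_test)

print(model.intercept_, model.coef_, r2_test)
```

Unlike `sm.OLS`, `LinearRegression` fits an intercept by default, so no constant column is needed.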

Have a look at the Linear Regression examples in Python.

https://www.youtube.com/watch?v=QGIXYbjhHws

You can learn more about linear regression code in Python by reading blogs and discussions and by watching video tutorials.

If you want to excel as a coder or an expert in Linear Algebra for Machine Learning, you should have a strong background in mathematics. You will need a thorough knowledge of vectors and matrices and of how to apply them to solve linear systems of equations. Further, you must know how to apply these to computational problems.

In this post, we have taken a look at Linear Algebra and the vital role it plays in Machine Learning. We looked at different ways of performing linear regression using Python. You may go for a refresher, a crash course, or a deeper video course for a better understanding.

Closing Thoughts

I hope this has sparked your interest in Linear Algebra for Machine Learning. You may look for courseware or other high-quality resources on Linear Algebra for Machine Learning.

Mastering Python for linear regression will prepare you better for a rewarding career in Python. Tremendous growth, enormous learning, and lucrative salary are some of the well-known perks of a promising career in Python. Add to that the magic touch of a Data Analytics course, and you are ready to rock!

A Python career also offers diversity in terms of career choices. One can start off as a developer or programmer and later switch to the role of a data scientist.

With a substantial amount of experience and a Python online course certification, one can become a certified trainer in Python or an entrepreneur.

Digital Vidya offers one of the best-known Data Science Courses for a promising career in Data Science using Python. The industry-relevant curriculum, pragmatic market-ready approach, and hands-on Capstone Project are some of the best reasons for choosing it.

In addition, students get lifetime access to online course matter, 24×7 faculty support, and expert advice from industry stalwarts. Last but not least, you get assured placement support that prepares you better for the vastly expanding Data Science market.