A matrix, as you learned in mathematics and in C programming, is a 2-dimensional array. A 2-dimensional array in programming is often represented by ‘m’ rows and ‘n’ columns, written as m x n matrix. A data element held in each slot of a matrix is called matrices.

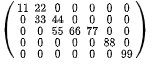

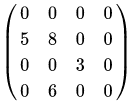

In programming (in mathematics, and in general), a matrix that has more zero matrices than non-zero matrices, is called a Sparse Matrix. For example:

To understand what is sparse matrix, consider the above matrix for example. It contains 5 rows and 7 columns, with the possibility to hold 35 matrices or elements.

Of these 35 matrices, only 9 are non-zero elements while 26 are zero. This is an example of a sparse matrix, with 74% sparsity (26/35) and 26% (9/35) density.

The opposite of a sparse matrix is one that has more non-zero elements than zero elements and is called a dense matrix.

Now that you know what is sparse matrix, you may wonder, ‘what is the point of having a sparse matrix in the first place’, it turns out, according to Introduction to Linear Algebra, Fifth Edition, 2016, that In practice, most large matrices are sparse – almost all entries are zeros’.

If there are 100,000 words in the language model, then the feature vector has length 100,000, but for a short email message almost all the features will have count zero.

-Artificial Intelligence: A Modern Approach, Third Edition, 2009

Memory Drawbacks of a Sparse Matrix

Every element of a program array takes up memory and a sparse matrix can end up taking unnecessary amounts of memory space. For example, a 10×10 array can occupy 10 x 10 x 1 byte (assuming 1 byte per element) = 100 bytes. If a majority of these elements say 70 of 100, are zeroes, then 70 bytes of space is essentially wasted. Sometimes large sparse matrices are too big to fit into memory.

Computational Drawbacks of a Sparse Matrix

Performing algorithmic computations (like matrix multiplication, for example) takes up a lot of unnecessary time for each zero computation. Anything multiplied by zero is zero, but this operation still has to be performed which is seen as a waste of computational time.

A sparse matrix can be compressed and memory reduction can be achieved by storing only the non-zero elements. However, this will also require programming additional structures to recover the original matrix when elements have to be accessed, but overall a compressed sparse matrix can ultimately increase computational speed.

Sparse matrices are very common in machine learning algorithms. Now that your question ‘what is sparse matrix’ is answered, let’s understand some examples and its uses.

Here’s an interesting tutorial to identify a sparse matrix in c:

Sparse Matrix Occurrence Examples in Programming & Machine Learning

As mentioned earlier, sparse matrices are a common occurrence in Machine Learning algorithms.

1. In Data Storage

Activity count arrays often end up being a sparse matrix. For example:

(a) In a movie application like Netflix, the array that stores the check of which movies are watched and not watched in a catalog.

(b) In e-commerce programs, data that represents the products purchased and not purchased by a user.

(c) In a music app, the count of songs listened and not listened to by a user.

2. In Data Preparation

Sparse matrices are often seen in encoding schemes, which are used for data preparation. Examples:

(a) One-hot encoding, which is used to represent categorical data as sparse binary vectors.

(b) Count encoding, which is used in the representation of the frequency of words in a document.

(c) TF-IDF encoding, which is used in representing frequency scores of words in the vocabulary.

3. Machine Learning Study Areas

Sometimes, machine learning study areas require the development of specialized methods to address sparse matrices as input data. Examples are:

(a) Natural Language Processing when working with text documents

(b) Recommendation systems for product catalog programs.

(c) In Computer Vision when scanned images have a lot of dark or black pixels.

Different Methods of Sparse Matrix Representation & Compression

Storing a sparse matrix as is takes up unnecessary space and increases computational time. There are ways for sparse matrix representation in a ‘compressed’ format, which improves its efficiency.

NOTE: In all these examples, the rows and columns start with 0. So a 4×3 matrix has rows 0 1 2 3 and columns 0 1 2.

1. Dictionary of Keys

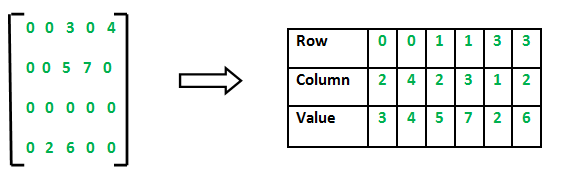

In this method, a simple dictionary is created where a row and column index is mapped to non-zero values. Every other remaining value is zero. This is a popular method for sparse matrix representation.

Example:

You can code the conversion of above Sparse matrix in c++ this way:

// C++ program for Sparse Matrix Representation // using Array #include<stdio.h> int main() { // Assume 4x5 sparse matrix int sparseMatrix[4][5] = { {0 , 0 , 3 , 0 , 4 }, {0 , 0 , 5 , 7 , 0 }, {0 , 0 , 0 , 0 , 0 }, {0 , 2 , 6 , 0 , 0 } }; int size = 0; for (int i = 0; i < 4; i++) for (int j = 0; j < 5; j++) if (sparseMatrix[i][j] != 0) size++; // number of columns in compactMatrix (size) must be // equal to number of non - zero elements in // sparseMatrix int compactMatrix[3][size]; // Making of new matrix int k = 0; for (int i = 0; i < 4; i++) for (int j = 0; j < 5; j++) if (sparseMatrix[i][j] != 0) { compactMatrix[0][k] = i; compactMatrix[1][k] = j; compactMatrix[2][k] = sparseMatrix[i][j]; k++; } for (int i=0; i<3; i++) { for (int j=0; j<size; j++) printf("%d ", compactMatrix[i][j]); printf("\n"); } return 0; }

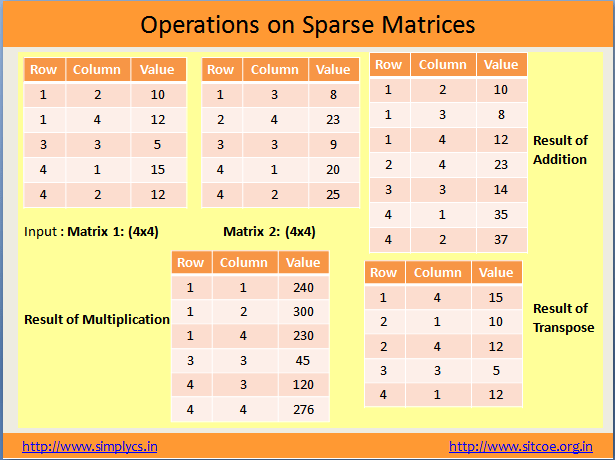

Here is an example of addition and multiplication operations for sparse matrix in data structure converted by the dictionary of keys method:

2. List of Lists

In this method of sparse matrix representation, each matrix row is stored as a list with a separate sublist that stores the column index and non-zero value.

3. Coordinate List

In this method of sparse matrix representation, a list of tuples is created where each tuple stores the row index, column index, and the non-zero value.

Methods of Sparse Matrix Representation

The following two methods of sparse matrix representation are most commonly used for the sparse matrix in the data structure. These methods provide increased computational speeds for more efficient operations.

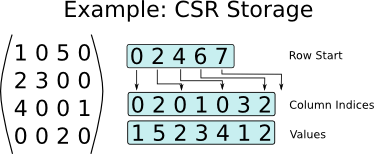

1. Compressed Sparse Row (CSR, CRS or also known as Yale format)

In this method, the sparse matrix is represented by 3 arrays, one holding the non-zero values, one representing the row mapping and one representing the column mapping. The following example will better illustrate how it is used.

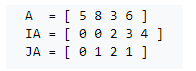

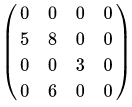

Consider the sparse matrix shown in the above example. It is a 4×4 matrix with 4 non-zero elements and 12 zero elements. The CSR representation of this matrix will be:

In the above CSR representation

A = non-zero elements of the matrix

IA = Row mapping

JA = Column mapping

The CSR arrays are created in the following method:

(i) The non-zero elements are depicted in an array of one row (array ‘A’ in the above image), by checking for non-zero elements in row order (going ahead from row 0).

(ii) The row mapping array (array ‘IA’ in the above image) always starts with a 0. Every subsequent value is the increment of the number of non-zero elements, row-wise. In our example:

- By rule, the first value is 0

- Row 0 has no non-zero elements so next value is 0+0=0

- Row 1 has 2 non-zero elements so the next value is 0+2=2

- Row 2 has 1 non-zero element so the next value is 2+1=3

- Row 3 has 1 non-zero element so the next value is 3+1=4

- The final row mapping is [0 0 2 3 4]

(iii) The column mapping array (array ‘JA’ in the above image) stores the column value of each non-zero element.

- First non-zero element (5) is in column 0

- Second non-zero element (8) is in column 1

- Third non-zero element (3) is in column 2

- Fourth non-zero element (6) is in column 1

- Final column mapping array is [0 1 2 1]

The final CSR arrays have a total of 13 elements as opposed to 16 elements in the parent matrix. Computational algorithms (like addition, multiplication) can be implemented directly to the CSR arrays to obtain results.

Another example to help you understand better:

2. Compressed sparse column (CSC or CCS)

CSC is a sparse matrix representation similar to CSR, but where the column values are compressed as indices and read first after which the row indices are read.

If we consider the same example matrix:

The CSC solution will be:

(i) Value array (created by taking non-zero elements column-wise top-down then left to right): [5 8 6 3]

(ii) Row Index (derived the same as the same way as column index in CPR): [1 1 3 2]

(iii) Column Pointer (derived same as the same way as row pointer in CPR): [0 1 3 4 4]

The CSC arrays are created in the following method:

(i) The non-zero elements are depicted in an array of one row (Value Array mentioned above), by checking for non-zero elements in column order (going down from column 0, then each column left to right)

(ii) The row mapping array (Row Index mentioned above) stores the row value of each non-zero element. In our example:

- First non-zero element (5) is in row 1

- Second non-zero element (8) is in row 1

- Third non-zero element (6) is in row 3

- Fourth non-zero element (3) is in row 2

- Final Row index is [1 1 3 2]

(iii) The column mapping array (Column Pointer mentioned above) always starts with a 0. Every subsequent value is the increment of the number of non-zero elements, column-wise. In our example:

- By rule, the first value is 0

- Row 0 has no non-zero elements so next value is 0+0=0

- Row 1 has 2 non-zero elements so the next value is 0+2=2

- Row 2 has 1 non-zero element so the next value is 2+1=3

- Row 3 has 1 non-zero element so the next value is 3+1=4

- The final row mapping is [0 0 2 3 4]

CSC method of the sparse matrix in the data structure is food for column slicing, arithmetic operations, matrix-vector products, and is the preferred format for specifying a sparse matrix in MATLAB.

The Outcome of Sparse Matrix Compression

We’ve understood what sparse matrices are, learned that they are very common in fields like machine learning, seen an example for sparse matrix in c, the sparse matrix in the data structure, and learned that they can be compressed for better memory utilization and better efficiency.

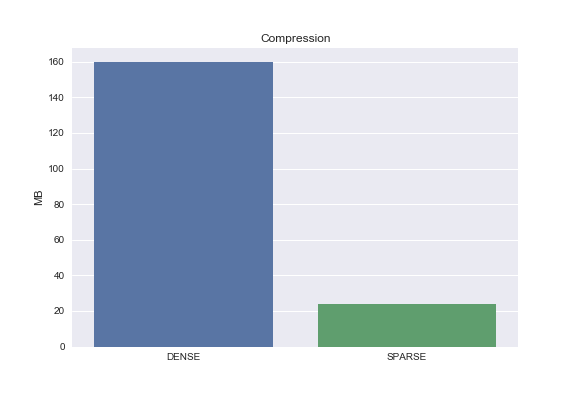

To give you a sense of how much a compression method like CSR can affect a sparse matrix, consider the following example.

First, we generate a sparse matrix:

import numpy as np np.random.seed(seed=12) ## for reproducibility dataset = np.random.binomial(1, 0.1, 20000000).reshape(2000,10000) ## dummy data y = np.random.binomial(1, 0.5, 2000) ## dummy target variable

Next, we use a CSR algorithm in Scipy to compress our generated sparse matrix ‘dataset’

from scipy.sparse import csr_matrix sparse_dataset = csr_matrix(dataset)

import seaborn as sns dense_size = np.array(dataset).nbytes/1e6 sparse_size = (sparse_dataset.data.nbytes + sparse_dataset.indptr.nbytes + sparse_dataset.indices.nbytes)/1e6 sns.barplot(['DENSE', 'SPARSE'], [dense_size, sparse_size]) plt.ylabel('MB') plt.title('Compression')

Comparison of memory occupation before and after CSR compression:

As you can see from the image above, the matrix before CSR compression (indicated as ‘dense’ in the image) occupied 160MB space, which got reduced to a little over 20MB.

Summarizing Sparse Matrices

A sparse matrix is a 2-dimensional matrix of ‘m’ rows and ‘n’ columns, and a total of m x n elements where the majority of elements are zero.

Sparse matrices commonly occur in fields like machine learning and are most often too large in size to store. The large number of zero elements also results in arithmetic operations that can be avoided, wasting computational time.

Both these drawbacks, of memory and computational inefficiency, can be solved by ‘compressing’ the sparse matrix using one of many methods.

Compressed sparse matrices take incredibly space and can be used for fast computation.

Tee are many libraries that support sparse matrices which can be used in programming languages like Python Here are some open-source examples:

- SuiteSparse

- ALGLIB

- PETSc – popular for sparse matrix in c

- Eigen3

- MUMPS

- PaStix

- SuperLU

- Armadillo

- SciPy

- SPArse Matrix (spam) R package

Conclusion

Wide sparse matrices are common in general and in particular in applied machine learning, such as data containing counts, data encoding mapping categories to count, and even entire machine learning subfields such as natural language processing.

You should enroll in a Data Science Course to get the right data science skillset. This will help you lift your Data Scientist career.