Every day, thousands of web pages are added to the world's information highway, the World Wide Web (WWW). Search engines act as the gateway for searchers who seek a myriad of information from the web, so they face the daunting task of matching the pages they retrieve with the searcher's intent. Web crawlers are software programs and cannot analyze a web page the way a human sees it. Instead, they perform algorithmic operations with mathematical precision during the information retrieval process. SEO professionals work with those operations so that web crawlers find an optimized website easy to crawl and highly relevant to the searcher's intent. SEO is the process of adjusting the internal structure and external environment of a website so that it ranks highly in organic search.

SEO is a two-stage process. First, it incorporates the factors that make a website or web page crawlable and therefore indexable. In non-technical terms, these factors make the website visible to the web crawler. Second, the website's internal structure and external environment are improved to increase the relevance and importance of the website or page in the eyes of search engines. The aim of this stage is for the search engine to find the web page the most relevant result for the search query.

First stage of the SEO process: making the website crawlable

SEO professionals should follow the practices below to make a website visible to the crawler.

1. Indexable content

The main content of the website should be available as HTML text. Content that exists only inside image files, Flash files, Java applets, or Ajax-loaded fragments is difficult or impossible for search engines to crawl.

2. Internal Link Structure

Every page must be reachable through links from the top-level page (Home Page) so that the search engine can crawl even the furthest web pages.

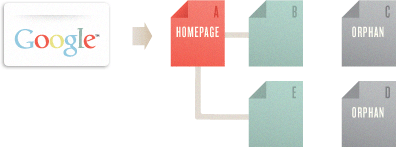

Diagram 1: Pages B & C are internally linked to the Home Page. Pages C & D are orphans, so the search engine can't reach them.
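The idea of an orphan page can be sketched in a few lines of Python. This is only an illustration: the page paths and the `links` dictionary are hypothetical, and a real audit would build the link map by crawling the site. The function walks the links starting at the home page and reports every page it never reaches.

```python
from collections import deque

def find_orphans(links, home):
    """Return pages that cannot be reached from `home` by following links."""
    seen = {home}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    # Every page that appears anywhere in the link map, as source or target.
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(all_pages - seen)

# Hypothetical site: the two "old" pages link to each other,
# but nothing reachable from the home page links to them.
site = {
    "/": ["/products", "/contact"],
    "/products": ["/"],
    "/contact": ["/"],
    "/old-offer": ["/old-terms"],
    "/old-terms": ["/old-offer"],
}
orphans = find_orphans(site, "/")
print(orphans)
```

Any page listed in the result needs at least one internal link from a page that is itself reachable from the home page.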

3. Website Navigational Structure

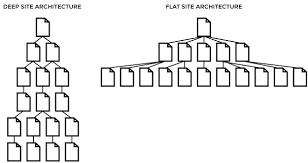

A visitor who arrives at the website through a search engine is often disoriented at first. Design the navigational structure so that the visitor can become familiar with the website in the shortest possible time; this improves engagement metrics. Always stick to a flat structure: it is advisable to keep the furthest web page no more than 2-3 clicks away from the top-level page.

Diagram 2: Flat structure vs. deep structure. Visitors are better served by a flat site structure.
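The 2-3 click guideline can be checked mechanically. The sketch below assumes a hypothetical link map (page path to the list of pages it links to) and measures how many clicks each page is from the home page, then lists the pages that sit deeper than the chosen limit.

```python
from collections import deque

def click_depths(links, home):
    """Map each reachable page to its minimum number of clicks from `home`."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def pages_deeper_than(links, home, max_clicks=3):
    """List pages that need more than `max_clicks` clicks to reach."""
    depths = click_depths(links, home)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)

# Hypothetical deep structure: each level links only to the next one.
deep_site = {
    "/": ["/cat"],
    "/cat": ["/cat/sub"],
    "/cat/sub": ["/cat/sub/list"],
    "/cat/sub/list": ["/cat/sub/list/item"],
}
too_deep = pages_deeper_than(deep_site, "/")
print(too_deep)
```

Linking such a page directly from a category or hub page brings it back within the limit.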

4. Sitemap

A sitemap is a file that gives the search engine a list of all the web pages of the website. It helps the crawler find every page. It also tells the search engine when each page was last updated and how often it is expected to change. All the major search engines, including Google, accept sitemaps in XML format. The code below shows a standard XML sitemap entry.

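<?xml version="1.0" encoding="UTF-8"?>

<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">

<url>

<loc>http://www.example.com/mypage</loc>

<lastmod>2013-10-10</lastmod>

<changefreq>monthly</changefreq>

<priority>1.0</priority>

</url>

</urlset>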
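For a site with many pages, the same format can be generated instead of written by hand. The following is a minimal sketch using Python's standard `xml.etree.ElementTree` module; the `build_sitemap` helper and the entry dictionary are illustrative names, not part of any SEO tool.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(entries):
    """Build a sitemap document from a list of dicts with sitemap fields."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for entry in entries:
        url = ET.SubElement(urlset, "url")
        # Emit the fields in the order used by the sitemap format.
        for tag in ("loc", "lastmod", "changefreq", "priority"):
            if tag in entry:
                ET.SubElement(url, tag).text = str(entry[tag])
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

xml_text = build_sitemap([
    {
        "loc": "http://www.example.com/mypage",
        "lastmod": "2013-10-10",
        "changefreq": "monthly",
        "priority": "1.0",
    },
])
print(xml_text)
```

Only `loc` is required for each entry; the other three fields are optional hints to the crawler.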

5. Leveraging the robots.txt file: Restricting the Search Engines' Crawling Process

This is a simple text file located at the root of the domain (www.yourdomain.com/robots.txt). It is a versatile tool through which a webmaster can control how search engines crawl specific web pages or content. You can use it to:

(a) Deny the scanning of non-public information

(b) Avoid the indexation of duplicate content

(c) Block the crawling of specific scripts, codes, utilities.

(d) Allow auto-discovery of the XML sitemap.

A sample robots.txt file (this one blocks every crawler from the entire site):

User-agent: *

Disallow: /
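Before publishing a robots.txt file, it helps to test what it actually blocks. The sketch below uses Python's standard `urllib.robotparser` module; the `can_crawl` helper, the `/private/` folder, and the example URLs are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser

def can_crawl(robots_txt, user_agent, url):
    """Check whether `user_agent` may fetch `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

# The sample above: every crawler is blocked from the whole site.
block_all = "User-agent: *\nDisallow: /\n"
# A narrower, hypothetical rule set that blocks one folder only.
block_private = "User-agent: *\nDisallow: /private/\n"

page = "http://www.example.com/mypage"
report = "http://www.example.com/private/report.html"

print(can_crawl(block_all, "Googlebot", page))
print(can_crawl(block_private, "Googlebot", page))
print(can_crawl(block_private, "Googlebot", report))
```

With the first rule set nothing on the site may be crawled, so a rule like `Disallow: /` should only be used deliberately, for example on a staging server.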

6. Adherence To Clean IP Policy

Over the years, search engines have come to associate certain servers, hosts, or blocks of IP addresses with spamming. Investigate the track record of a server, host, or IP range beforehand in order to avoid penalties from the search engines later.

Second stage of SEO: enhancing search visibility through internal improvement and link authority

With the internal link structure (website architecture) in place, you must look into certain parameters of all the pages (or at least the few most important pages) of the website to improve search visibility. The operations fall broadly into two groups: adjusting the internal parameters of a page is referred to as On-page SEO, and adjusting its external environment so as to earn authoritative links is Off-page SEO.

On-page SEO- Tweaking The Internal Environment

In a nutshell, it encompasses broad factors such as:

- Domain level keyword & agnostic features

- Keyword targeting.

- Unique & value added content

7. Domain Level Keyword And Agnostic Features

- Use the focused keyword in the domain name.

- The domain name must be unique. It shouldn't resemble a popular or competing domain.

- Keep the domain name short and easy to remember.

- Use "-" (hyphen) to separate multiple words in the URL.

- Search engines are believed to prefer domains with a long registration history over domains registered one year at a time.

8. Keyword targeting

It is often misunderstood as the whole of SEO strategy, but it is only one part. The process begins with identifying a few focused keywords relevant to the business profile or to the content the publisher wants to offer. There are various free keyword research tools; the most popular among them is the Google AdWords keyword tool, which is basically meant for PPC campaigns.

Nonetheless, the keywords the tool returns work perfectly well in the organic search environment. Those keywords are then placed in the important parts of the web page. It is well documented that search engines, in order to understand topical relevance, place importance on the presence of focused keywords in the HTML meta tags, the header tags, and the content itself.

(a) <title> tag-

- Should contain the focused keyword, preferably at the beginning. Each page should have a unique title.

- Should make clear what visitors can expect from the page.

- Target long-tail keywords if they are relevant.

- Try to keep the title within 50 characters.

- Always use static domain.

Standard <title> tag format:

<!DOCTYPE html>

<!--[if lt IE 9]> <html class="oldie"> <![endif]-->

<!--[if gt IE 10]><!--> <html lang="en-US"> <!--<![endif]-->

<head>

<title>………………..</title>
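The title guidelines above lend themselves to a quick automated check. This is a rough sketch built on Python's standard `html.parser`; the `check_title` helper, the sample page, and the keyword are hypothetical, and the 50-character limit is simply the figure suggested in this article.

```python
from html.parser import HTMLParser

class TitleParser(HTMLParser):
    """Collect the text found inside the <title> element."""

    def __init__(self):
        super().__init__()
        self.in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self.in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data

def check_title(html, keyword, max_length=50):
    """Report the title, whether it fits the limit, and where the keyword sits."""
    parser = TitleParser()
    parser.feed(html)
    title = parser.title.strip()
    return {
        "title": title,
        "within_limit": len(title) <= max_length,
        "starts_with_keyword": title.lower().startswith(keyword.lower()),
    }

sample_page = "<html><head><title>SEO Basics: A Beginner's Guide</title></head></html>"
result = check_title(sample_page, "SEO basics")
print(result)
```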

(b) Meta Description tag-

Optimizing the Meta Description has lost much of its importance for ranking because the tag was over-optimized in the past. However, the Meta Description is displayed in the search results just beneath the title and page URL. A succinctly written Meta Description can draw visitors and improve CTR, and may thus indirectly help the page's ranking.

- Always tell the truth.

- Keep it succinct: the limit is about 150 characters.

- Write the description like ad copy.

- Each page should have a unique description.

Standard Meta Description Format:

<meta name="description" content="……………." />

Tip: Search engines look down on duplicate title tags and Meta Description tags.
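Duplicate titles and descriptions are easy to miss on a large site. The sketch below assumes the titles and descriptions have already been collected into a dictionary keyed by URL (the data shown is made up) and groups the URLs that share the same value. It also flags descriptions longer than the 150-character limit mentioned above.

```python
from collections import defaultdict

def find_duplicates(pages, field):
    """Group URLs whose `field` ("title" or "description") is identical."""
    groups = defaultdict(list)
    for url, meta in pages.items():
        value = meta.get(field, "").strip().lower()
        if value:
            groups[value].append(url)
    return {value: sorted(urls) for value, urls in groups.items() if len(urls) > 1}

def too_long_descriptions(pages, limit=150):
    """List URLs whose description exceeds `limit` characters."""
    return sorted(
        url for url, meta in pages.items()
        if len(meta.get("description", "")) > limit
    )

# Hypothetical data, as it might be collected by a crawl of the site.
pages = {
    "/shoes": {
        "title": "Running Shoes",
        "description": "Lightweight running shoes for daily training.",
    },
    "/shoes?ref=home": {
        "title": "Running Shoes",
        "description": "Lightweight running shoes for daily training.",
    },
    "/boots": {
        "title": "Hiking Boots",
        "description": "Waterproof boots. " * 10,
    },
}
print(find_duplicates(pages, "title"))
print(too_long_descriptions(pages))
```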

(c) Header tag: Use the focused keyword at least in the <h1> tag, preferably at the beginning.

(d) Image alt attribute: Include the focused keyword in the alt attribute of the <img> tag wherever it describes the image naturally.

(e) In the content itself: Use the focused keyword in the content, ideally in the first paragraph. Excessive keyword stuffing kills the natural flow of the content. Keyword cannibalization (several pages of one site targeting the same keyword) also receives a poor rating from the search engines.
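One rough way to spot keyword stuffing is to measure how much of the text the keyword takes up. The sketch below is an illustration only: the sample text is invented, and no particular density is claimed here to be a threshold that search engines use.

```python
import re

def keyword_density(text, keyword):
    """Fraction of the words in `text` that belong to occurrences of `keyword`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    key = keyword.lower().split()
    if not words or not key:
        return 0.0
    n = len(key)
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == key)
    return hits * n / len(words)

def keyword_in_first_paragraph(text, keyword):
    """Check whether the keyword appears before the first blank line."""
    first_paragraph = text.split("\n\n", 1)[0]
    return keyword.lower() in first_paragraph.lower()

stuffed = "Cheap shoes here. Buy cheap shoes, cheap shoes, cheap shoes now."
density = keyword_density(stuffed, "cheap shoes")
print(round(density, 2))
print(keyword_in_first_paragraph(stuffed, "cheap shoes"))
```

A density this high (8 of 11 words) reads unnaturally to a visitor, which is the practical signal to rewrite the passage.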

9. Unique and Value added content

Appearing at the top of the organic listing depends on two factors: relevance and importance. Search engines reward unique, value-added content, and these two qualities make a page relevant and important for both visitors and search engines. The first critical step is to avoid creating poor content.

Three forms of poor content exist:

- Thin content: creating page after page with very little content.

- Thin slicing: creating a separate page for each variation of a particular product.

- Duplicate content: identical or nearly identical content exists on two different pages of the same domain or on two different domains (plagiarism).

Unintentional duplicate content may arise when:

- identical content exists on two different URLs that differ only in a session ID or cookie parameter.

- content exists at a URL generated by a search query (common on large e-commerce sites), and that URL later gets linked from other content.

- content exists on both the http:// and https:// versions of the site.

You can tackle such problems with the rel="canonical" tag and the robots meta tag.

Canonical tag: tells the search engines which URL is the preferred version of a page to index and show to searchers.

Code sample: <link rel="canonical" href="http://example.com/blog" />
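To show how the duplicate URLs described above can be mapped to one preferred version, here is a minimal sketch. The session parameter names in `SESSION_PARAMS` and the choice of https as the preferred scheme are assumptions; a real site would use its own parameter names and preferred scheme.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed names of session parameters; adjust to what the site really uses.
SESSION_PARAMS = {"sessionid", "sid", "phpsessid"}

def canonical_url(url, scheme="https"):
    """Drop session parameters and force one scheme and a lowercase host."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in SESSION_PARAMS
    ]
    return urlunsplit((scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))

def canonical_link_tag(url):
    """Render the <link rel="canonical"> tag for the page's preferred URL."""
    return '<link rel="canonical" href="%s" />' % escape(canonical_url(url))

print(canonical_url("http://Example.com/blog?sessionid=abc123"))
print(canonical_url("https://example.com/blog?page=2&sid=9"))
print(canonical_link_tag("http://example.com/blog?sid=42"))
```

Every variant of the page then carries the same canonical tag, so the search engine can consolidate them into one listing.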

The robots meta tag is a small piece of HTML placed in the page's source code that instructs the web crawler not to perform the specified operations.

- "noindex": do not index the page.

- "nofollow": do not follow the links on the page or pass link value through them.

- "noarchive": do not make a cached copy of the web page available in the search results.

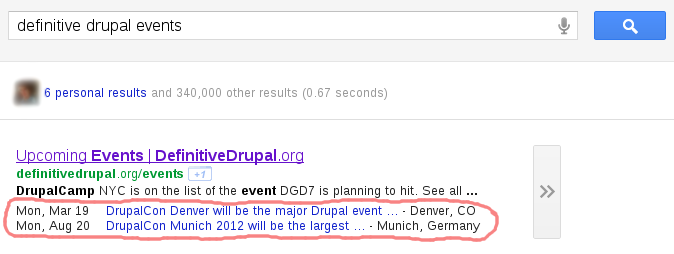
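To verify which directives a page actually carries, the robots meta tag can be read back out of the HTML. The sketch below uses Python's standard `html.parser`; the `robots_directives` helper and the sample markup are illustrative.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collect the directives of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            for directive in content.split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())

def robots_directives(html):
    """Return the set of robots directives declared in the page."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives

sample_head = '<head><meta name="robots" content="noindex, nofollow" /></head>'
found = robots_directives(sample_head)
print(sorted(found))
```

An accidental "noindex" left over from development is a common reason for a page to be missing from the search results, so this check is worth running on important pages.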

Schema.org: Another content markup feature, introduced by Google, Bing and Yandex, is Schema.org. It is a structured-data vocabulary, added to a page's HTML, that marks up items in the content so that search engines can show them as rich snippets in the organic results. Richer listings can improve relevance, perceived importance and trustworthiness, and may thus indirectly help page ranking.
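As an illustration of what such markup looks like, the sketch below builds a small Schema.org description of an article in JSON-LD, one of the syntaxes that can carry Schema.org data. The `article_json_ld` helper and the headline, author, and date values are placeholders, not taken from any real page.

```python
import json

def article_json_ld(headline, author, date_published):
    """Build a JSON-LD <script> block describing an article."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
    }
    payload = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n%s\n</script>' % payload

# Placeholder values for illustration only.
tag = article_json_ld("A Beginner's Guide to SEO", "Jane Doe", "2015-10-10")
print(tag)
```

The resulting block is placed in the page's HTML; whether a rich snippet is actually shown remains the search engine's decision.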

10. Off-page SEO- Manipulating External Environment For Garnering Quality Back-link

Apart from relevance and importance, the other crucial factor that determines page ranking is the authority, or online reputation, that the web page commands. Search engines determine page authority through link analysis.

Follow these off-page SEO practices:

- Popularize the content on social media, especially on FB, Twitter, Google+, LinkedIn and Pinterest.

- Publish guest posts on popular article publishing sites like Ezine and link back to your content through anchor text.

- Editorial linking: great content often gets linked from other similar content. This is a very natural process.

- Link exchange: exchange links with service-related websites that can help popularize the web page.

- Link baiting: link to others' content in your post and request them to do the same. The content needs to be trustworthy.

- Content syndication: linking to the content of another web page in return for reciprocal linking.

- Directory submission: submit the website to popular directories like DMOZ, Yahoo Directory, etc.

However, after Google's Penguin update, the last four options have become ineffective for building authoritative back-links.

Technical SEO Factors

- Site load speed is an important SEO factor.

- Keep page caching enabled.

- Use compression for faster page loads.

- Aim for a page load time of about 2 to 2.5 seconds.
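To get a feel for what compression saves, the sketch below gzips a block of HTML with Python's standard `gzip` module and compares the sizes. The repeated sample markup is artificial, so the saving on a real page will differ.

```python
import gzip

def compression_savings(body):
    """Return (original size, gzipped size, fraction saved) for `body` bytes."""
    compressed = gzip.compress(body)
    saved = 1 - len(compressed) / len(body)
    return len(body), len(compressed), saved

# Artificial, highly repetitive markup; real pages compress less dramatically.
sample_html = b"<p>Search engine optimization basics</p>\n" * 200
original, compressed, saved = compression_savings(sample_html)
print(original, compressed, round(saved, 2))
```

On a live site the compression itself is normally switched on in the web server configuration rather than in application code.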

The Last Word-

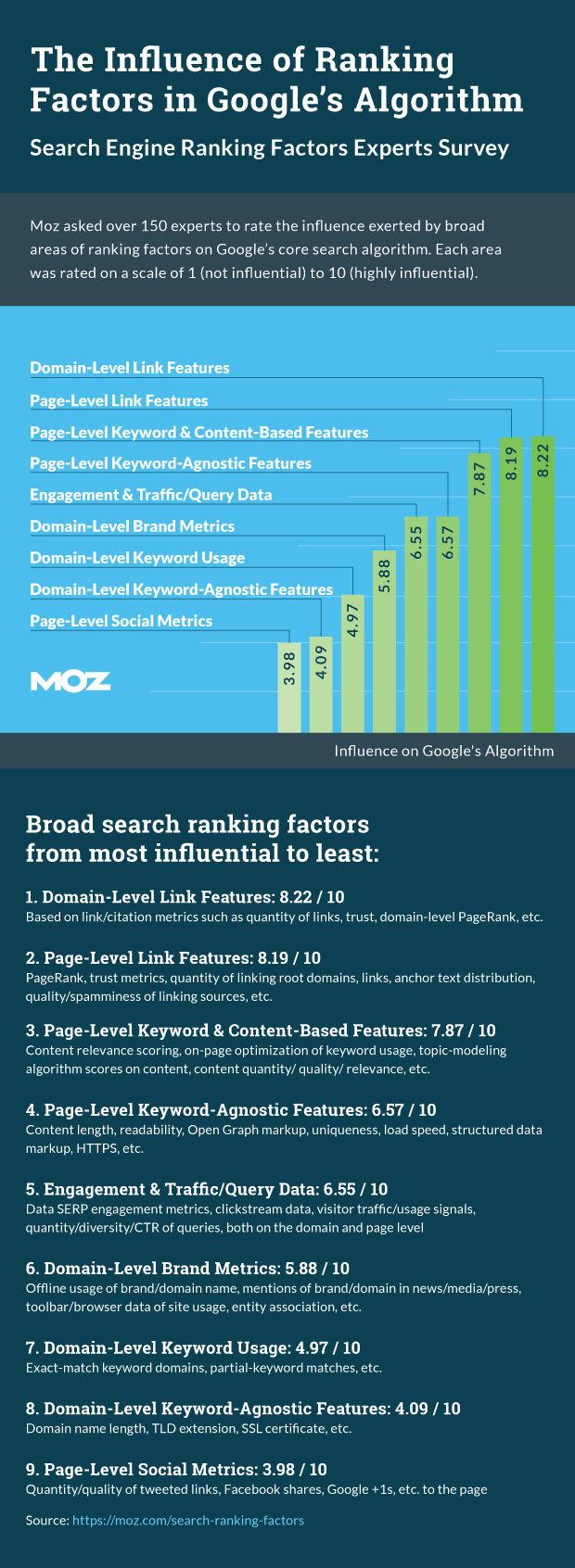

The Moz in 2015, surveyed 150 SEO professional and came up with 90 prominent ranking factors which are grouped as:

Source: Moz

Dear digitalvidya,

This is a very nice post for seo’s…

I want to know how to allow search engines in robots.txt. Can you give me the code?

The robots.txt file is used to control a web crawler's access to web pages.

To allow everything on your website to be crawled:

User-agent: *

Allow: /

Alternatively, you can deny crawling of a particular folder but allow access to certain files within that folder.

Example 1:

User-agent: *

Disallow: /folder/

Allow: /folder/file1.html

Example 2:

User-agent: *

Allow: /*?$

Disallow: /*?
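How these Allow and Disallow lines interact can be sketched in code. The example below assumes the longest-match rule that Google documents and that RFC 9309 describes: the most specific matching pattern wins, and Allow wins a tie. It also assumes `*` matches any run of characters and `$` anchors the end of the URL. The `is_allowed` helper is illustrative, and other crawlers may resolve conflicts differently.

```python
import re

def _pattern_to_regex(pattern):
    """Translate a robots.txt path pattern ('*' and trailing '$') to a regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))

def is_allowed(rules, path):
    """rules: list of ("allow" | "disallow", pattern) pairs for one user agent."""
    best_length, allowed = -1, True  # no matching rule means crawling is allowed
    for kind, pattern in rules:
        if not pattern:
            continue  # an empty pattern restricts nothing
        if _pattern_to_regex(pattern).match(path):
            is_allow = kind == "allow"
            longer = len(pattern) > best_length
            tie_won_by_allow = len(pattern) == best_length and is_allow
            if longer or tie_won_by_allow:
                best_length, allowed = len(pattern), is_allow
    return allowed

example_1 = [("disallow", "/folder/"), ("allow", "/folder/file1.html")]
example_2 = [("allow", "/*?$"), ("disallow", "/*?")]

print(is_allowed(example_1, "/folder/file1.html"))
print(is_allowed(example_1, "/folder/other.html"))
print(is_allowed(example_2, "/page?"))
print(is_allowed(example_2, "/page?id=1"))
```

Under these assumptions, Example 1 blocks the folder except for file1.html, and Example 2 blocks URLs that contain a query string while still allowing URLs that merely end in "?".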