Data science is the extraction of insights from massive volumes of refined or unrefined data. Sampling is a technique used (in data science and other fields) to select a smaller subset of a data set, study it, and apply the resulting inferences to the entire volume of data. Using sampling techniques to derive insights drastically reduces the time and cost of analysis compared with analyzing the entire volume of data.

The rapid growth in technology has fueled the growth of companies, leading to an increased amount of data being produced. Every organization creates huge volumes of data on a daily basis, which is essentially useless unless analyzed.

Analyzing data becomes a time-consuming process as the volume of data increases, and this is where data sampling techniques are implemented.

The most common use of data sampling, however, is in research, where samples consist of people (while the collected output is still data). Whatever the purpose, the sampling methods and their execution remain the same.

Opinion polls held before the actual voting day are one of the oldest examples of sampling. A small group of voters is asked who they are going to vote for, and this result is projected as a possible outcome of the actual vote.

Data sampling allows researchers and analysts to arrive at conclusions faster by saving time on the data collection and analysis steps of the research process.

Consider cancer research in healthcare, for example. By using a sample of 100 patients (rather than the entire population of cancer patients) to study the success rate of a particular medication on cancer cells, medical researchers are able to come to a conclusion faster. If the sample is representative, the findings can then be generalized to the wider population of cancer patients.

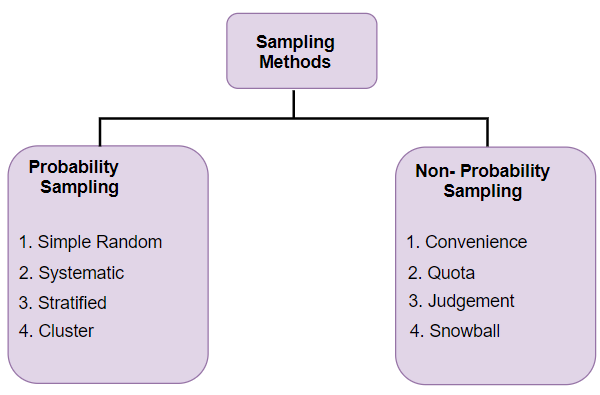

Classifications of Sampling Techniques

(i) Probability Sampling – These techniques select data subsets at random, so every item has a known, non-zero chance of being picked.

(ii) Non-probability Sampling – There is some element of judgment, decision, or process used to select subsets from the overall sample population.

| | Probability Sampling Methods | Non-Probability Sampling Methods |
|---|---|---|
| Definition | Probability sampling techniques are methods where data subsets are selected at random. | Non-probability sampling techniques are methods where some judgment or involvement of the researcher goes into data subset selection. |
| Other names | Random sampling technique. | Non-random sampling technique. |
| Method of population selection | Random | Non-random |
| Type of research | Often used in conclusive research. | Often used in exploratory research. |
| Sample quality | Since the sample is randomly selected, the chances of it representing the entire population are high. | Since the sample is not randomly selected, the chances of it representing the entire population are lower compared to probability techniques. |
| Time for research | It can take longer than non-probability techniques. | It is comparatively faster. |
| Results | Results are often conclusive. | Results are often speculative. |

Types of Sampling Techniques

The following 13 sampling techniques are commonly used:

1. Simple Random Sampling

Simple random sampling, as the name suggests, involves the random picking of data items from a sample to form a subset. Every item within the sampling frame has an equal probability of being picked for the sampling subset.

This is one of the most commonly used and most popular sampling techniques. Picking samples at random increases the chances of the final subset being an accurate representation of the larger population.
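As a minimal sketch of the idea, the snippet below draws a simple random sample without replacement using Python's standard `random` module. The function name `simple_random_sample`, the `seed` parameter, and the customer-ID frame are illustrative assumptions, not part of any particular tool.

```python
import random


def simple_random_sample(frame, n, seed=None):
    """Draw n items without replacement; every item is equally likely."""
    rng = random.Random(seed)  # a seed makes the draw repeatable
    return rng.sample(list(frame), n)


# Hypothetical sampling frame: customer IDs 1..1000.
frame = range(1, 1001)
subset = simple_random_sample(frame, 10, seed=42)
print(subset)
```

Fixing the seed is only for reproducibility while experimenting; for a real study the draw would normally be left unseeded or seeded from an audited source.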

2. Systematic Sampling

Also called interval sampling, systematic sampling involves arranging the sample population according to some predefined ordering scheme, and then selecting data items at regular intervals to form the sampling subset.

The starting point of selection is picked at random (rather than always defaulting to the first item in the sample), and selection then proceeds in order at the fixed interval. This is a type of probability sampling technique.

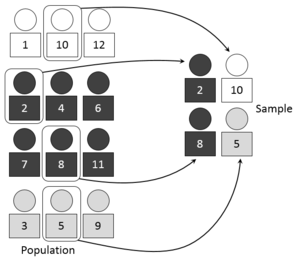
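Interval selection can be sketched in a few lines of Python. This version assumes one common convention: the interval `k` is the population size divided by the sample size (rounded down), and the random start is drawn from within the first interval. The function name and the numbered-ticket data are hypothetical.

```python
import random


def systematic_sample(items, n, seed=None):
    """Pick every k-th item after a random start inside the first interval."""
    if not 0 < n <= len(items):
        raise ValueError("n must be between 1 and len(items)")
    rng = random.Random(seed)
    k = len(items) // n        # sampling interval
    start = rng.randrange(k)   # random start in [0, k)
    return [items[start + i * k] for i in range(n)]


# Hypothetical ordered population: tickets numbered 1..100.
tickets = list(range(1, 101))
print(systematic_sample(tickets, 10, seed=5))
```

If the ordering of the population has a periodic pattern that lines up with the interval, the sample can be biased, so the ordering scheme deserves a quick check before using this approach.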

3. Stratified Sampling

When the study sample (or collected data) consists of numerous categories, the sample can be grouped based on categories, called ‘strata’.

Items are then randomly picked out of each ‘stratum’ to form the final sampling subset. This technique is useful when there are a large number of classifications and categories in the base population pool.
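A rough sketch of stratified sampling in Python follows. It assumes proportional allocation (the same fraction is drawn from every stratum, with at least one item each), which is one common choice rather than the only one. The names `stratified_sample`, `key`, and `fraction`, and the survey records, are illustrative.

```python
import random
from collections import defaultdict


def stratified_sample(records, key, fraction, seed=None):
    """Group records into strata, then sample the same fraction from each."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for record in records:
        strata[key(record)].append(record)

    sample = []
    for label in sorted(strata):  # sorted for a stable iteration order
        members = strata[label]
        take = max(1, round(len(members) * fraction))  # at least one per stratum
        sample.extend(rng.sample(members, take))
    return sample


# Hypothetical records: (department, employee_id).
staff = [("sales", i) for i in range(60)] + [("support", i) for i in range(40)]
picked = stratified_sample(staff, key=lambda r: r[0], fraction=0.1, seed=0)
print(picked)
```

With a 10% fraction this draws 6 sales and 4 support records, so each category keeps its share of the final subset.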

4. Probability-Proportional-to-Size Sampling

PPS is a method for sampling a finite set of data where a size measure is available for each data unit. When the units differ in size, samplers assign each unit a size measure and select units at random with a probability proportional to that measure, so larger units are more likely to be picked. Combined with drawing the same number of items inside each selected unit, this keeps the overall selection probability of individual items roughly equal.

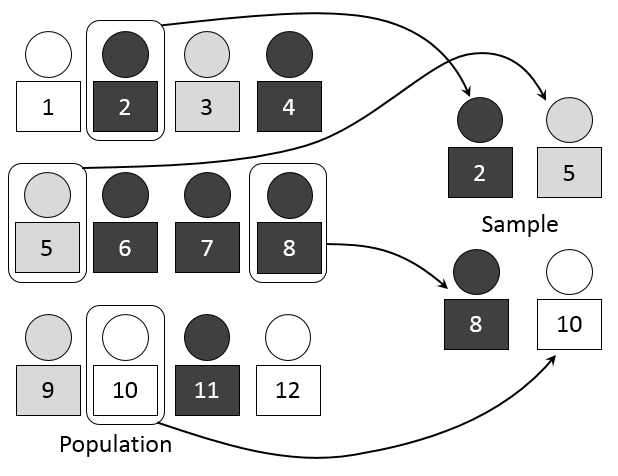

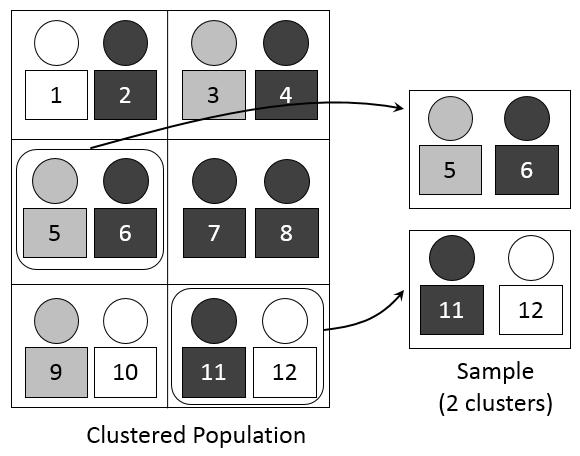
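One simple way to illustrate size-proportional selection is weighted drawing with replacement, sketched below with `random.choices` from the Python standard library. This is an assumption made for brevity: practical PPS designs often sample without replacement or use a systematic variant. The village names and household counts are made up.

```python
import random


def pps_sample(units, sizes, n, seed=None):
    """Draw n units with replacement; selection probability is proportional to size."""
    rng = random.Random(seed)
    return rng.choices(units, weights=sizes, k=n)


# Hypothetical units: villages, with household counts as the size measure.
villages = ["A", "B", "C", "D"]
households = [100, 300, 500, 100]
print(pps_sample(villages, households, 5, seed=7))
```

Here village C, holding half of all households, has a 50% chance on each draw, while A and D have 10% each.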

5. Cluster Sampling

Clustering is one of the types of sampling techniques where data is first grouped into clusters based on some similarity, and then random clusters are selected to form the sampling subset.

For example, instead of picking houses at random for interviews, houses are grouped by locality, and random localities are selected into the subset. Every house within the selected locality is then interviewed.
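The locality example can be sketched as follows: records are grouped by cluster, whole clusters are picked at random, and every record inside a picked cluster is kept. The function name `cluster_sample`, its parameters, and the house data are hypothetical.

```python
import random
from collections import defaultdict


def cluster_sample(records, cluster_of, n_clusters, seed=None):
    """Randomly choose whole clusters and keep every record inside them."""
    rng = random.Random(seed)
    clusters = defaultdict(list)
    for record in records:
        clusters[cluster_of(record)].append(record)

    chosen = rng.sample(sorted(clusters), n_clusters)  # pick cluster labels
    return [record for label in chosen for record in clusters[label]]


# Hypothetical houses: (locality, house_number).
houses = [(loc, num) for loc in ["north", "south", "east", "west"]
          for num in range(1, 6)]
print(cluster_sample(houses, cluster_of=lambda h: h[0], n_clusters=2, seed=1))
```

Note the contrast with stratified sampling: there, a few items are taken from every group, while here every item is taken from a few groups.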

6. Quota Sampling

In quota sampling, data items are first grouped based on similarity, just as in stratified sampling, and then data items are selected based on preselected criteria to form the sampling subset.

For example, population data is grouped based on age and gender, and then the subset is formed by defining criteria, like ‘100 men and 150 women between the ages of 25 and 35’. This is an example of a non-probability sampling technique: items are picked to fill the quotas rather than at random.
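A minimal sketch of quota filling is shown below. It assumes the simplest possible rule, taking the first matching records encountered until each quota is full, which is exactly what makes the method non-random. The function name, the `group_of` callback, and the respondent data are illustrative.

```python
def quota_sample(records, group_of, quotas):
    """Fill each group's quota with the first matching records encountered."""
    remaining = dict(quotas)  # copy so the caller's quotas are not mutated
    sample = []
    for record in records:
        group = group_of(record)
        if remaining.get(group, 0) > 0:
            sample.append(record)
            remaining[group] -= 1
    return sample


def group_25_to_35(person):
    """Hypothetical grouping rule: gender, but only for ages 25 to 35."""
    gender, age = person
    return gender if 25 <= age <= 35 else None


people = [("man", 30), ("woman", 28), ("man", 40),
          ("woman", 33), ("man", 26), ("woman", 31)]
print(quota_sample(people, group_25_to_35, {"man": 1, "woman": 2}))
# prints [('man', 30), ('woman', 28), ('woman', 33)]
```

Because selection depends on the order in which respondents happen to arrive, the result can differ from what a random draw within each group would give.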

7. Minimax Sampling

Minimax sampling is used in artificial intelligence, decision theory, game theory, statistics and philosophy in order to reduce the chances of loss for a worst-case scenario.

8. Accidental Sampling

Accidental sampling is a technique where subsets are selected from a data sample that is currently at hand, rather than waiting for larger complete samples. This is an example of a non-probability sampling technique.

9. Voluntary Sampling

Voluntary sampling is a non-probability sampling technique where the subset consists of data provided by volunteers rather than randomly picked items.

10. Line-Intercept Sampling

This is a sampling technique where a data item is chosen for the subset if a pre-decided line segment, called a ‘transect’, intersects that element.

11. Panel Sampling

As with a focus group, in panel sampling a group of participants is selected at random and then interviewed multiple times (mostly with the same questions).

12. Snowball Sampling

This technique involves selecting an initial group of volunteers and then having these volunteers recruit more members.
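The recruitment process can be modeled as a walk over a referral network, sketched here as a breadth-first traversal. The `referrals` dictionary (who each participant would bring in), the `max_size` cap, and the names are all hypothetical stand-ins for real recruitment.

```python
from collections import deque


def snowball_sample(seeds, referrals, max_size):
    """Grow the sample wave by wave through participant referrals."""
    sample, seen = [], set()
    queue = deque(seeds)
    while queue and len(sample) < max_size:
        person = queue.popleft()
        if person in seen:
            continue  # already recruited through another referral
        seen.add(person)
        sample.append(person)
        queue.extend(referrals.get(person, []))
    return sample


# Hypothetical referral network.
referrals = {"ann": ["bob", "cy"], "bob": ["dee", "ann"], "cy": [], "dee": ["eve"]}
print(snowball_sample(["ann"], referrals, max_size=4))
# prints ['ann', 'bob', 'cy', 'dee']
```

The final sample depends heavily on who the initial volunteers are, which is one reason snowball sampling is treated as a non-probability method.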

13. Theoretical Sampling

Theoretical sampling is a method where data results are used to select samples in order to understand data items further.



Use of Sampling in Different Fields

1. Healthcare Sector

Data analytics is an important aspect of healthcare, where insights derived from collected data help the medical community find breakthroughs for diseases.

Progress is crucial in the healthcare sector in order to continually find cures and prevention methods, and sampling techniques in research methodology help with this data analysis.

Clinical trials are one way of finding medical solutions, and clinical research typically begins by using sampling methods to define a target focus group.

Probability sampling (random sampling techniques) is a preferred sampling technique for clinical trials in order to get a good mix of unbiased data sets. The initial sample for clinical trials is recruited on a volunteer basis, from which random individuals are selected for the subset.

Another area of healthcare where sampling techniques are used is in predicting the probability of disease outbreaks. Studying health conditions at geographic, ethnic, gender, and other similar levels helps medical officials predict possible outbreaks of diseases.

Cluster sampling is the preferred technique for this purpose, with samples divided into clusters based on geography, ethnicity, etc.

A medical study of a particular condition is carried out using cohort or panel sampling. A random subset of individuals with a common medical condition is selected and then analyzed over time to understand how the condition changes.

Panel sampling is also used in medical research to study the impact of predefined factors on health. For example, to understand the medical impact of living in extremely hot regions, a panel of individuals who live in hot geographic areas is selected and interviewed repeatedly over the course of time. The data collected can then be studied to derive meaningful insights.

Snowball sampling is used when volunteers for a particular study are either not available or not willing to participate. In this case, medical personnel collect data from available volunteers and then invite them to bring in more volunteers.

2. Educational Sector

Sampling techniques are used in educational research to study the characteristics of a select group of students, to project and generalize results on the larger population as a whole.

Educational research may be conducted to study the impact of the institution on students within the campus, the impact of geographic factors on students across the city, the impact of teaching methods across a sample of students, etc. Sampling techniques in research methodology are chosen based on the type of study.

Random and cluster sampling techniques are often used for this purpose, by selecting students at random from different classrooms and/or schools, or clustering students into groups by age or any other predefined factor and then selecting clusters at random for study.

Sampling for educational research is often conducted in multiple steps because of the different levels of base samples. The sample for selection of subsets has to be first defined, whether it is on a city level, school level, or classroom level (or a combination). Then the subset is defined by one of the sampling techniques.

Another use of sampling in the education field is in studying different teaching techniques and their impact. For this, a sample of teachers is chosen, and a sample of students if necessary. Random probability sampling is often the choice of sampling technique.

Sampling Techniques in Research Methodology

Sampling techniques in research methodology depend on the type of research and domain.

Choosing Probability Sampling Techniques

The advantages of choosing probability sampling techniques (e.g. simple random, stratified random, cluster, and systematic sampling) in research are:

(i) Sampling is easy to conduct (although it can be time-consuming).

(ii) Chances of collecting a good sample are high.

(iii) It is beneficial for most research projects because of random data.

The disadvantages are:

(i) Identifying all members of a huge group can be difficult.

(ii) Data collection can be difficult due to the randomness of participants.

Choosing Non-Probability Sampling Techniques

In research, the advantages of choosing non-probability sampling techniques (e.g. quota sampling, voluntary sampling) are:

(i) Cost and time-effective.

(ii) Useful when the initial sample pool is small.

(iii) Used for a qualitative, pilot, or exploratory study.

The disadvantages are:

(i) Since the sample is filtered, researchers are unable to confirm whether the selected sample represents the larger population well.

Sampling techniques are chosen once the purpose of the study is defined, and depending on the availability of a base pool of data or population.

Why Sampling is an Important Step in Research

The purpose of conducting research, of whatever kind and irrespective of domain, is to deduce some tangible results that can be used for positive action.

In an ideal scenario, this would mean interviewing and testing every single member of the population who matches the sample criteria. But assume the research is for the impact of prolonged cell phone usage.

The number of people who use cell phones for a prolonged time is extremely high, in the billions, and applying the ideal scenario of surveying and studying each one of them is impossible.

This is where sampling techniques help researchers. Being able to perform a study on a small subset of the population and still obtain results that closely approximate those for the whole population saves an incredible amount of time and money, and the findings can then be applied to benefit the population.
